New and updated cybersecurity regulations for medical devices have recently been released, are in process, or are under consideration in markets worldwide.

While the need to assure the security of medical devices is long-standing, today, we are also facing new challenges, pressures, and opportunities accompanying the expansion of distributed healthcare delivery. Demand for distributed healthcare technologies has been rising for several years, but the dual need to minimize social contact and protect vulnerable populations because of the COVID-19 global pandemic is rapidly accelerating that demand.

However, with smarter devices that support greater remote functionality comes the potential for larger, more frequent, and more destructive cyberattacks — all of which helps explain the need for increased and better-clarified security regulations for medical devices.

Medical device developers are no strangers to pressure to decrease time-to-market for new devices: We swim those waters every day and we know the waves come from all sides. But to many developers, engineers, and manufacturers, embedded cybersecurity — the security domain applicable to everything from “Internet of Things”-capable coffeemakers to implantable pacemakers and neurostimulators — is unfamiliar.

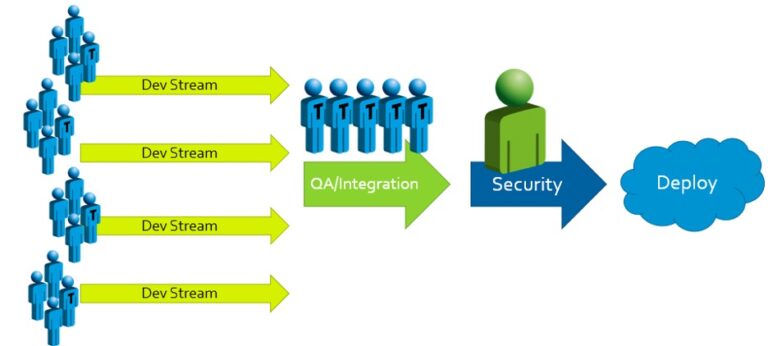

Traditional approaches to secure development often look something like this:

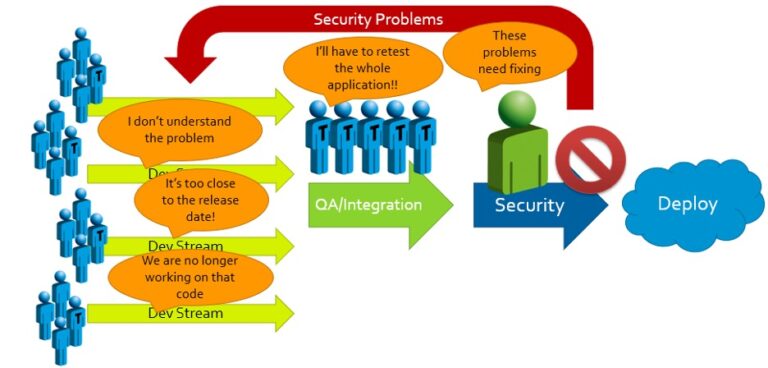

When security problems with potentially high impacts are found, the results and responses are predictable:

We’ve seen it time and again: When rigorous security evaluations are held until the final stage before product launch, the effort needed to secure the product becomes a massive roadblock. Management is forced to decide between:

(A) Accepting significant delays and unanticipated expenses to accommodate rework; or

(B) Signing off on an insecure product and attempting to release it to market.

And with updated cybersecurity regulations going into effect on a regular cadence, the increasingly well-informed security expectations held by potential customers, and the unignorable moral and fiscal responsibilities to patients and business shareholders, Option (B) is no longer available.

Fortunately, we’re not doomed to face Option (A)! There’s a better way: The Cybersecure Development Lifecycle (CDLC).

Some of the benefits of adopting the CDLC include:

- Confidence in meeting FDA expectations during premarket approval, thus avoiding needless redesign, rework, and testing. This reduces costs and shortens time to market.

- Protection of your valuable intellectual property, thereby avoiding inadvertently exposing it to your competition or letting others reap the rewards of your R&D without sharing in your investments.

- Avoiding negative impacts on your business model through the detection and prevention of counterfeit components or disposable accessories.

- Allowing your project managers to more accurately assess development progress by including cybersecurity testing as part of the process, instead of leaving it to become an expensive, unwanted surprise at the end of development.

- Gaining the ability to accurately answer customers’ pre-sales questions with the expected cybersecurity artifacts, thus increasing sales and overall market share, and boosting your reputation within the marketplace.

The Cybersecure Development Lifecycle approaches product development holistically. Instead of trying to shoehorn security in at the end, the CDLC integrates appropriate security activities into each step of development. With this approach, the results of security evaluations – scoped for each stage of the process – can immediately inform the next development sprint. The selection and assimilation of security solutions into the overall design take place concurrently with traditional development activities. Compliance artifacts (records and reports) are generated at designated intervals, which provides traceability to prove that security wasn’t a development “afterthought,” and that vulnerabilities were identified and addressed. This, in turn, assures regulators and purchasers – gatekeepers of medical device markets – that your device is secure, and therefore safe for deployment.

The essential elements of CDLC activities are:

- Design Vulnerability Assessment, a systematic, formal approach to analyzing a proposed design, identifying its potential vulnerabilities, and scoring them so that mitigations and redesign work are informed by appropriate priorities;

- Implementation Activities, including fuzz testing, penetration testing, static analysis, and unit testing, all performed concurrently with development and scoped to each development team’s responsibilities and schedule; and

- Iterative Review and Collaboration, which lets security outcomes from the previous sprint and cycle feed immediately into the next sprint and cycle. This drastically reduces the risks to schedules and budgets posed by insecure designs and development practices, increases project management’s awareness of project progress, and assures compliance with standards and regulatory expectations.
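To make the "scoring" step of a Design Vulnerability Assessment concrete, here is a minimal sketch in Python. It assumes a simple likelihood-times-impact score on a 1 to 5 scale. That scale, the `Finding` class, and the example findings are all hypothetical illustrations, not the CDLC's actual rubric. A real project would substitute whatever scoring system it has adopted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One potential vulnerability identified in a proposed design."""
    name: str
    likelihood: int  # 1 (unlikely) .. 5 (almost certain); hypothetical scale
    impact: int      # 1 (negligible) .. 5 (severe); hypothetical scale

    @property
    def score(self) -> int:
        # Assumed scoring rule: likelihood multiplied by impact.
        return self.likelihood * self.impact


def prioritize(findings):
    """Return findings ordered from highest to lowest score."""
    return sorted(findings, key=lambda f: f.score, reverse=True)


# Illustrative findings only; not taken from any real assessment.
findings = [
    Finding("Verbose error messages", likelihood=2, impact=1),
    Finding("Unauthenticated wireless pairing", likelihood=4, impact=5),
    Finding("Debug port left enabled", likelihood=3, impact=4),
]

for f in prioritize(findings):
    print(f"{f.score:>2}  {f.name}")
```

Sorting by score gives the team an ordered worklist, so mitigation and redesign effort goes to the highest-priority items first.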

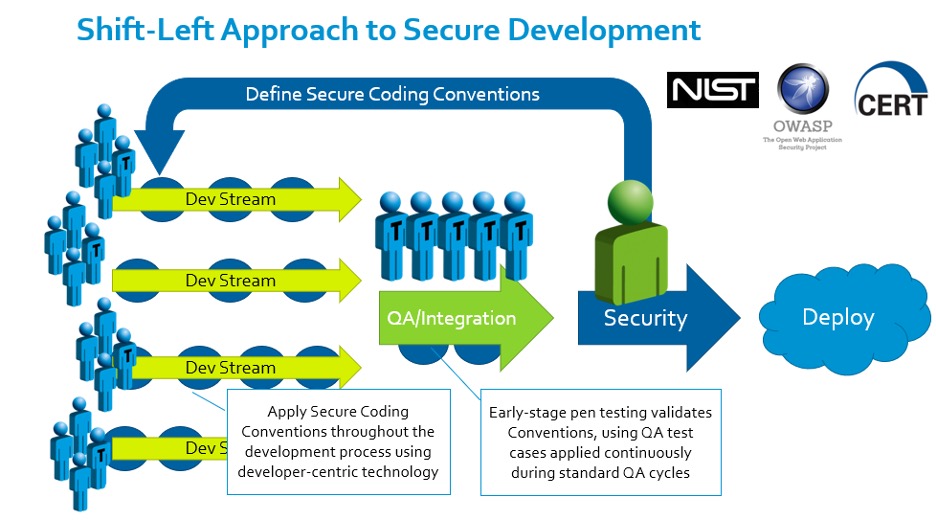

One of our partners in evangelizing the software side of the Cybersecure Development Lifecycle is Parasoft, whose automated testing tool enables the efficient development of compliant, manageable, high-quality, secure code. We like to call this the “Shift-Left” approach to secure development:

Image courtesy of Parasoft – automated software testing tools for security and quality assurance. Used by permission.

Informed by resources and standards from organizations like NIST, OWASP, and CERT, the project’s security team defines a secure coding convention. Automated tools enable developers to apply that convention smoothly within their existing rhythms, and the results of early-stage penetration testing and fuzzing drive improvement both to the software under development and to the conventions being applied to it. The result is early detection of code vulnerabilities and the elimination of potential conflicts of interest, which might otherwise pit security requirements, team morale, and the development schedule against one another.
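As a rough illustration of what early-stage fuzzing looks like in practice, the sketch below throws random byte strings at a small parser and records any input that fails in an unexpected way. Everything here is an assumption for illustration: `parse_packet` is a hypothetical length-prefixed parser, and `fuzz` is a toy harness, not a stand-in for a production fuzzing tool.

```python
import random


def parse_packet(data: bytes) -> bytes:
    """Hypothetical parser: a 1-byte length header followed by the payload."""
    if len(data) < 1:
        raise ValueError("empty packet")
    length = data[0]
    payload = data[1:]
    if length != len(payload):
        raise ValueError("length header does not match payload")
    return payload


def fuzz(target, iterations=1000, seed=0):
    """Feed random byte strings to target and collect unexpected failures.

    ValueError is treated as the target's intended rejection path, so it
    is ignored. Any other exception is recorded with the input that
    triggered it.
    """
    rng = random.Random(seed)  # fixed seed keeps runs reproducible
    crashes = []
    for _ in range(iterations):
        size = rng.randrange(0, 16)
        data = bytes(rng.randrange(256) for _ in range(size))
        try:
            target(data)
        except ValueError:
            pass
        except Exception as exc:
            crashes.append((data, exc))
    return crashes


crashes = fuzz(parse_packet)
print(f"{len(crashes)} unexpected failures")
```

Because the harness keeps the offending input alongside each exception, a failure found in one sprint can be turned into a regression test for the next. A parser that read `data[0]` without first checking the length, for example, would surface here as an `IndexError` on an empty input.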

This example is just one part of the total benefit of adopting the Cybersecure Development Lifecycle. To learn more about the CDLC, consider grabbing a copy of Medical Device Cybersecurity for Engineers and Manufacturers, a comprehensive guide to secure development coming soon from Artech House. Another option is to follow the Root of Trust series on our blog or reach out to Chris directly via LinkedIn with specific questions. We’re always thrilled to help medical device manufacturers secure their designs and bring new, life-changing medical devices to market.

One of the tools in our kit is Parasoft Solutions, which we and many of our customers use to eliminate potentially exploitable vulnerabilities in code under development by applying secure coding standards such as CERT (an example of the CERT C dashboard from Parasoft is below):

For more details, check out Parasoft’s blog on securing applications using CERT C.

As healthcare applications and medical devices become increasingly interconnected, securing and testing communication APIs becomes critical.

(More: Top 5 Must-Haves for API security, reliability and performance).

Velentium will soon be offering Parasoft training courses specifically for embedded software in medical device development. As part of the training, you will learn that successful technical leaders start with a clear vision of how the project will comply with both internal and external security standards. The sooner teams understand the technical depth involved, the better they can manage the effort, quality, and time-to-market tradeoffs.

Reach out to us to learn more about upcoming Parasoft training opportunities, or to schedule a consultation with our cybersecurity team for a current or upcoming embedded development project.